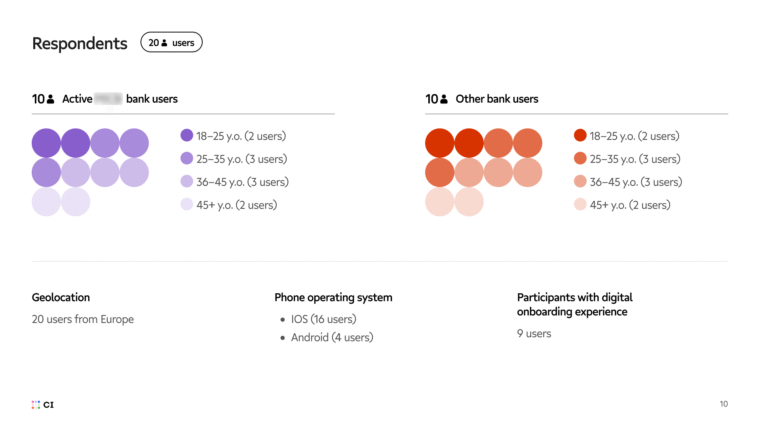

The project focused on identifying usability gaps across the client’s mobile app and demonstrating how structured user research can support product evolution in the long run. It combined deep-dive moderated sessions with large-scale unmoderated testing to provide a clear, evidence-based view of how customers interact with key banking features.

About the Client

A fast-growing European bank approached us to explore ways to continuously improve its mobile banking experience. The app was already growing steadily, with an expanding user base, positive feedback in app stores, and solid performance metrics.

However, the team had reached a point where analytics and internal product hypotheses were no longer enough. They wanted to learn directly from real users where friction occurs in their digital journeys, and to build internal user research expertise for long-term improvement.

The bank engaged us not only to conduct a comprehensive usability evaluation but also to teach its team Craft Innovations’ research methods and practices, so that it could later build its own user research processes and culture.

Project Goals

Together, we outlined several objectives:

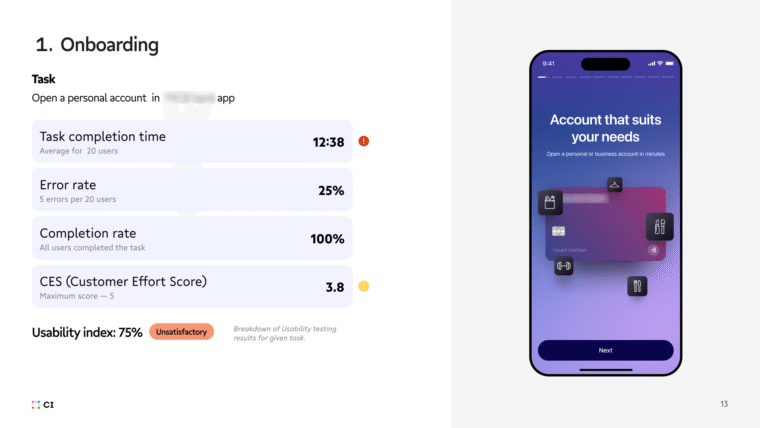

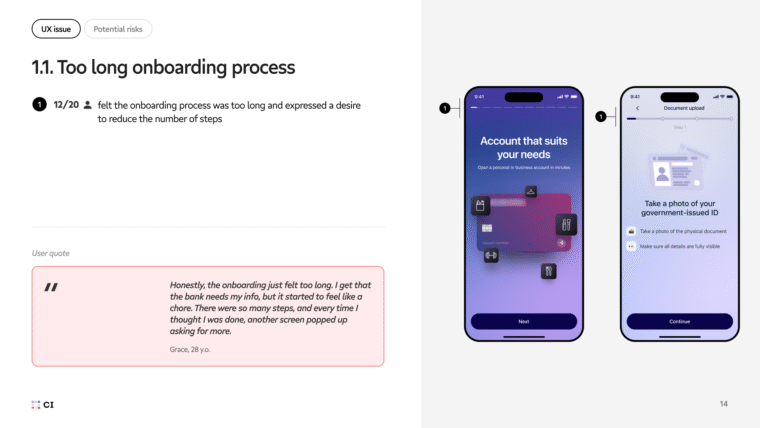

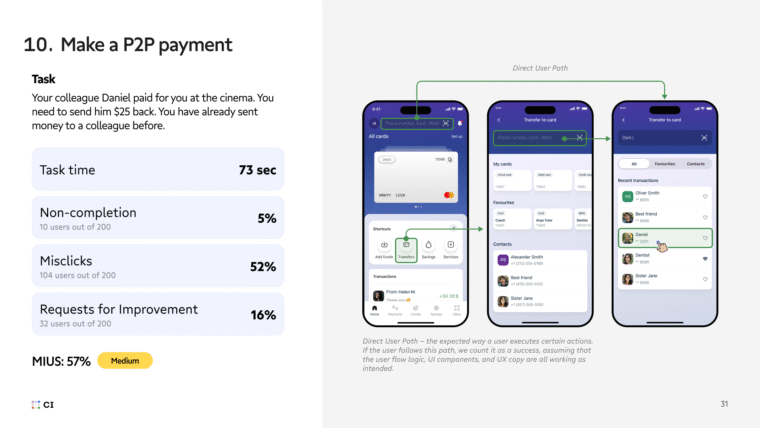

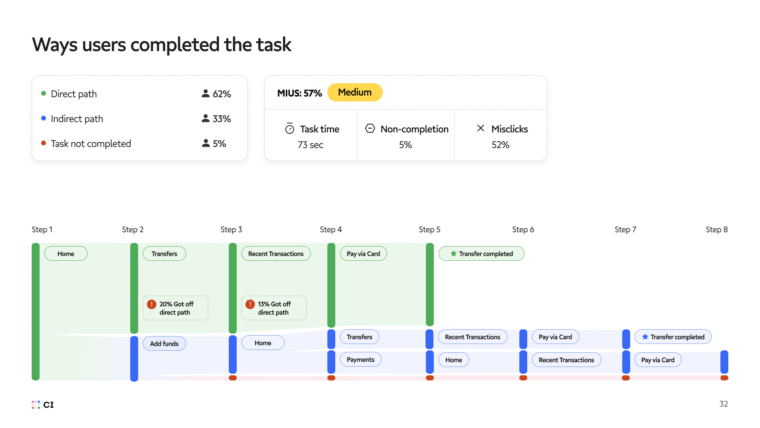

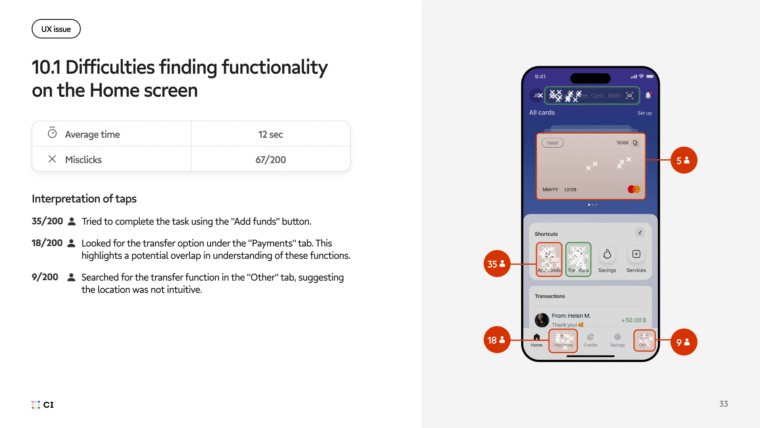

- Identify usability gaps across critical user flows – onboarding, payments, card management, and daily banking – through both moderated and unmoderated testing.

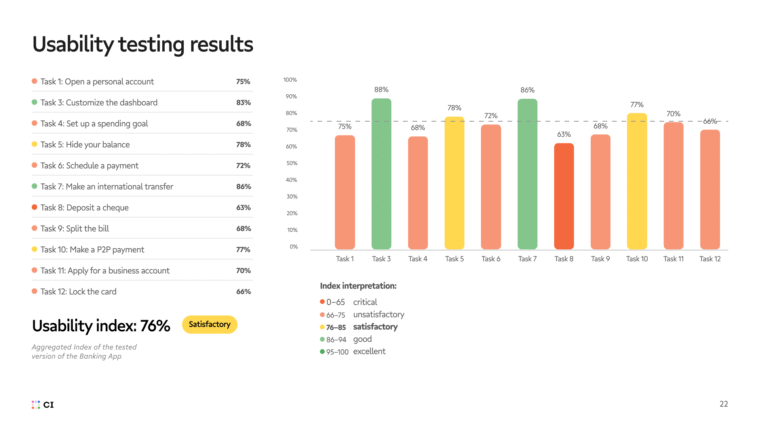

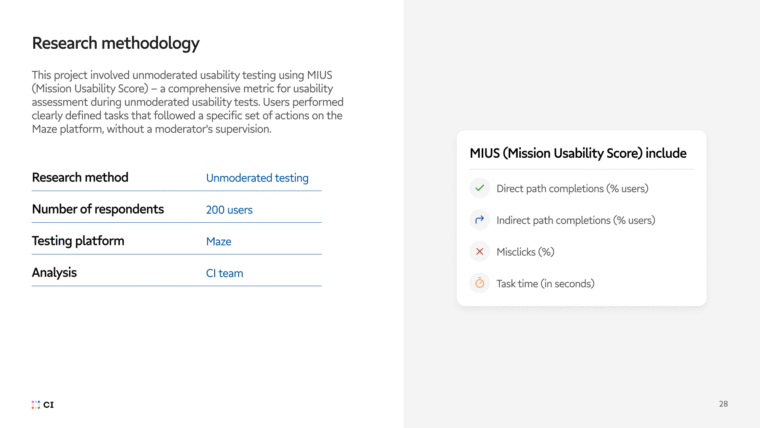

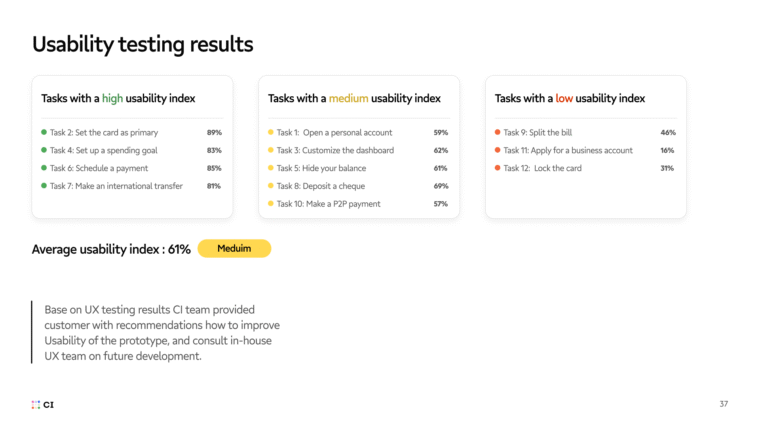

- Evaluate the client’s app using UX metrics, such as SUM (Single Usability Metric) and MIUS (Mission Usability Score).

- Provide clear recommendations on how to address identified UX gaps and improve the overall product experience.

- Demonstrate how usability testing works in practice – from planning and recruitment to synthesis and recommendations – and deliver a clear roadmap for internal UX research adoption.

- Educate the client’s product and design teams on how to interpret findings, prioritize fixes, and integrate UX research into their workflow.

Challenges

The main challenge was not a critical flaw in the app itself but a lack of visibility into user behavior. The product performed well, yet subtle issues – like unclear microcopy, missing context during KYC, inconsistent navigation, or friction in card-related actions – had gone unnoticed.

Internally, the client had no established user research practice. Designers and product owners relied on analytics, support feedback, and assumptions. The project needed to prove that user testing isn’t just “nice to have” – it’s a measurable tool for better business decisions.

Another layer of complexity was timing: the client wanted to conduct a large-scale evaluation without delaying other product initiatives. This required a solid research setup that was both comprehensive and easy to replicate later – and, at the same time, able to demonstrate its business value to the wider team.